KILN: Knowledge-Integrated Latent iNference

Afif Amir

A compact recurrent architecture that combines iterative latent refinement with a shared associative memory and learned halting to solve reasoning problems on structured grids.

Abstract

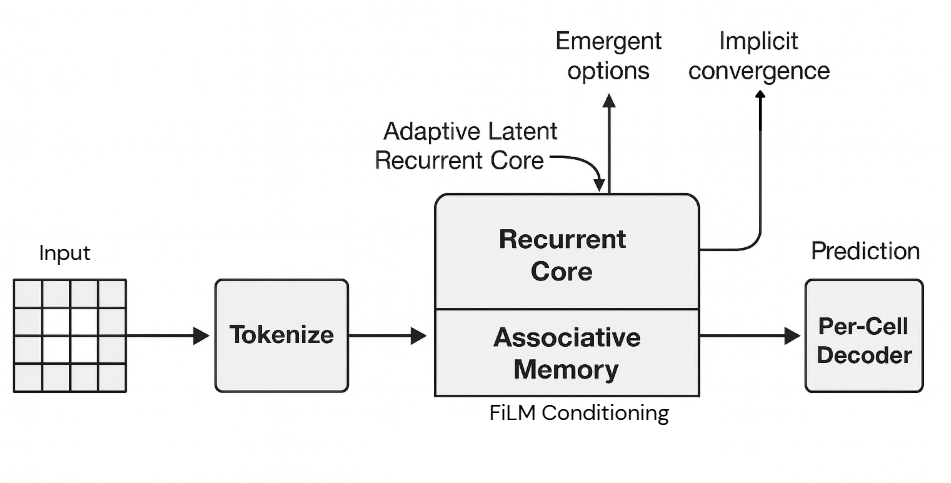

Reasoning on structured grids (ARC tasks, mazes, Sudoku) stresses a model's ability to propagate constraints, compose local rules, and allocate variable computation per instance. Large language models often lean on chain-of-thought (CoT) prompting and heavy priors, a combination that is brittle and inefficient for non-linguistic grid domains. Specialized recurrent systems such as the Hierarchical Reasoning Model (HRM) use fixed pass schedules and task-specific I/O, both of which limit adaptability. We present KILN (Knowledge-Integrated Latent iNference), a non-Transformer, adapter-free recurrent architecture that ingests inputs through a single factorized token path (row/col/value), performs contractive iterative refinement over per-cell states via spectrally normalized MLPs, conditions inference with a shared associative memory using FiLM-style modulation, and learns variable-depth computation through ACT-style differentiable halting. A per-cell denoise-to-solve decoder aligns supervision with grid edits. We formalize the update dynamics and provide a local contraction argument for stability, outline utility-aware memory policies (gated writes/eviction) informed by modern Hopfield perspectives, and specify a lightweight curriculum for ARC-style primitives, maze pathfinding, and Sudoku constraints. KILN is a prototype: a compact testbed intended to reduce reliance on CoT prompting and fixed schedules on variable-difficulty grids, with a forward path to text via the same unified input route.
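To make the idea of contractive iterative refinement concrete, the following is a minimal sketch and not the KILN implementation. It assumes a single linear layer followed by `tanh` in place of the spectrally normalized MLP, an update rule of the form `h <- tanh(W h + x)`, and a target spectral norm `rho = 0.8`. All function names and the value of `rho` are illustrative assumptions. Because `tanh` is 1-Lipschitz and the weight matrix is rescaled to have spectral norm below one, this toy update is a contraction in `h`, so two different initial states should be drawn toward the same fixed point.

```python
import math
import random

def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def spectral_norm(W, iters=200):
    """Estimate the largest singular value of W by power iteration on W^T W."""
    n_cols = len(W[0])
    v = [1.0 / math.sqrt(n_cols)] * n_cols
    Wt = [list(col) for col in zip(*W)]
    for _ in range(iters):
        v = matvec(Wt, matvec(W, v))
        norm = math.sqrt(sum(c * c for c in v))
        if norm == 0.0:
            return 0.0
        v = [c / norm for c in v]
    u = matvec(W, v)
    return math.sqrt(sum(c * c for c in u))

def normalize(W, rho=0.8):
    """Rescale W so its estimated spectral norm equals rho (assumed < 1)."""
    sigma = spectral_norm(W)
    return [[rho * w / sigma for w in row] for row in W]

def refine_step(W, h, x):
    """One refinement update h <- tanh(W h + x), with x a fixed input injection."""
    return [math.tanh(a + b) for a, b in zip(matvec(W, h), x)]

def distance(a, b):
    """Euclidean distance between two state vectors."""
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))

random.seed(0)
d = 4  # hypothetical per-cell state width
W = normalize([[random.gauss(0, 1) for _ in range(d)] for _ in range(d)])
x = [random.gauss(0, 1) for _ in range(d)]
h_a = [random.gauss(0, 1) for _ in range(d)]
h_b = [random.gauss(0, 1) for _ in range(d)]

gap_start = distance(h_a, h_b)
for _ in range(100):
    h_a, h_b = refine_step(W, h_a, x), refine_step(W, h_b, x)
gap_end = distance(h_a, h_b)
print(f"gap before: {gap_start:.3e}, gap after: {gap_end:.3e}")
```

The toy update contracts everywhere, which is stronger than the local contraction argument the abstract refers to; a multi-layer MLP with memory conditioning would need a more careful bound.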

Figure 1: KILN Architecture Overview
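The ACT-style halting mentioned in the abstract can be illustrated with a small sketch in the spirit of Adaptive Computation Time. This is an assumption about the general mechanism, not KILN's exact formulation: the function names, the threshold `eps`, and the example halting probabilities are hypothetical. Each refinement step emits a halting probability; computation stops once the cumulative probability reaches `1 - eps`, the last step receives the remaining mass, and the output is the weighted mean of the intermediate states.

```python
def act_halting_weights(halt_probs, eps=0.01):
    """Turn per-step halting probabilities into weights that sum to 1.

    Steps are consumed until the cumulative probability reaches 1 - eps
    (or the step budget runs out); the final step gets the remainder.
    """
    weights = []
    cumulative = 0.0
    for i, p in enumerate(halt_probs):
        is_last = i == len(halt_probs) - 1
        if cumulative + p >= 1.0 - eps or is_last:
            weights.append(1.0 - cumulative)  # remainder assigned to the halting step
            break
        weights.append(p)
        cumulative += p
    return weights

def act_output(states, halt_probs, eps=0.01):
    """Return the halting-weighted mean of the states and the number of steps used."""
    weights = act_halting_weights(halt_probs, eps)
    dim = len(states[0])
    mixed = [sum(w * s[k] for w, s in zip(weights, states)) for k in range(dim)]
    return mixed, len(weights)

# Hypothetical "hard" instance: halting mass accumulates slowly, so three steps are used.
states = [[1.0], [2.0], [3.0], [4.0]]
hard_weights = act_halting_weights([0.1, 0.3, 0.7, 0.9])
hard_out, hard_steps = act_output(states, [0.1, 0.3, 0.7, 0.9])

# Hypothetical "easy" instance: the first step is already confident, so one step is used.
easy_out, easy_steps = act_output(states, [0.995, 0.5, 0.5, 0.5])
print(hard_steps, easy_steps)
```

The weights depend smoothly on the halting probabilities while the step count is fixed, which is the sense in which this style of halting is differentiable. In a trained model the probabilities would come from a learned halting unit instead of fixed lists.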